I’ve been running Tailscale across my Hetzner nodes and desktop for a while now. It works. It’s painless. You install the client, sign in, and your devices find each other — done. But “it works” and “I’m happy with it” aren’t the same thing, and lately I’ve been thinking harder about what Tailscale’s coordination server actually knows about my infrastructure and whether I could do better on privacy and performance.

Then Cloudflare dropped Mesh this week. NetBird keeps shipping features. Headscale hit a level of maturity that makes it genuinely viable. Suddenly there are four real options for private mesh networking, each with a fundamentally different philosophy about who controls what, and each at a different level of maturity.

This isn’t a “which one is best” article — that question is meaningless without context. Instead, I’m going to compare all four on equal footing: architecture, installation, features, performance, security, and operational burden. At the end, I’ll explain what I’m actually going to do with my own infrastructure and why.

The Four Contenders

Before diving into details, here’s the landscape at a glance. All four solve the same fundamental problem — connecting your devices into a private network — but they approach it from very different directions.

Tailscale is the incumbent. Cloud-hosted coordination server, peer-to-peer WireGuard data plane, polished experience. You trade some metadata visibility for zero operational burden. It just works.

Headscale is the lightest self-hosting path. It’s an open-source reimplementation of Tailscale’s coordination server — you replace only the control plane while keeping the official Tailscale clients. Single binary, narrow scope, minimal ops.

NetBird is the fully independent option. Own client, own management server, own signal server, own relay infrastructure. Everything is open source (Apache 2.0), everything is self-hostable. More moving parts, but zero dependency on Tailscale Inc. for anything.

Cloudflare Mesh is the new entrant, announced April 14, 2026. Edge-routed through Cloudflare’s global network rather than peer-to-peer. Zero self-hosting, deep integration with Cloudflare One, but all your mesh traffic passes through Cloudflare’s infrastructure.

| | Tailscale | Headscale | NetBird | CF Mesh |
|---|---|---|---|---|
| Philosophy | Convenience-first | Minimal self-hosting | Maximum independence | Platform integration |
| Data plane | P2P WireGuard | P2P WireGuard | P2P WireGuard | Edge-routed (Cloudflare) |
| Self-hostable | No (client only) | Yes (server) | Yes (everything) | No |
| Client | Tailscale | Tailscale (same) | Own client | Cloudflare One / Mesh Node |
| Free tier | 100 devices / 3 users | Unlimited | Unlimited (self-hosted) | 50 nodes / 50 users |
| License | Client: BSD; Server: proprietary | BSD | Apache 2.0 | Proprietary |

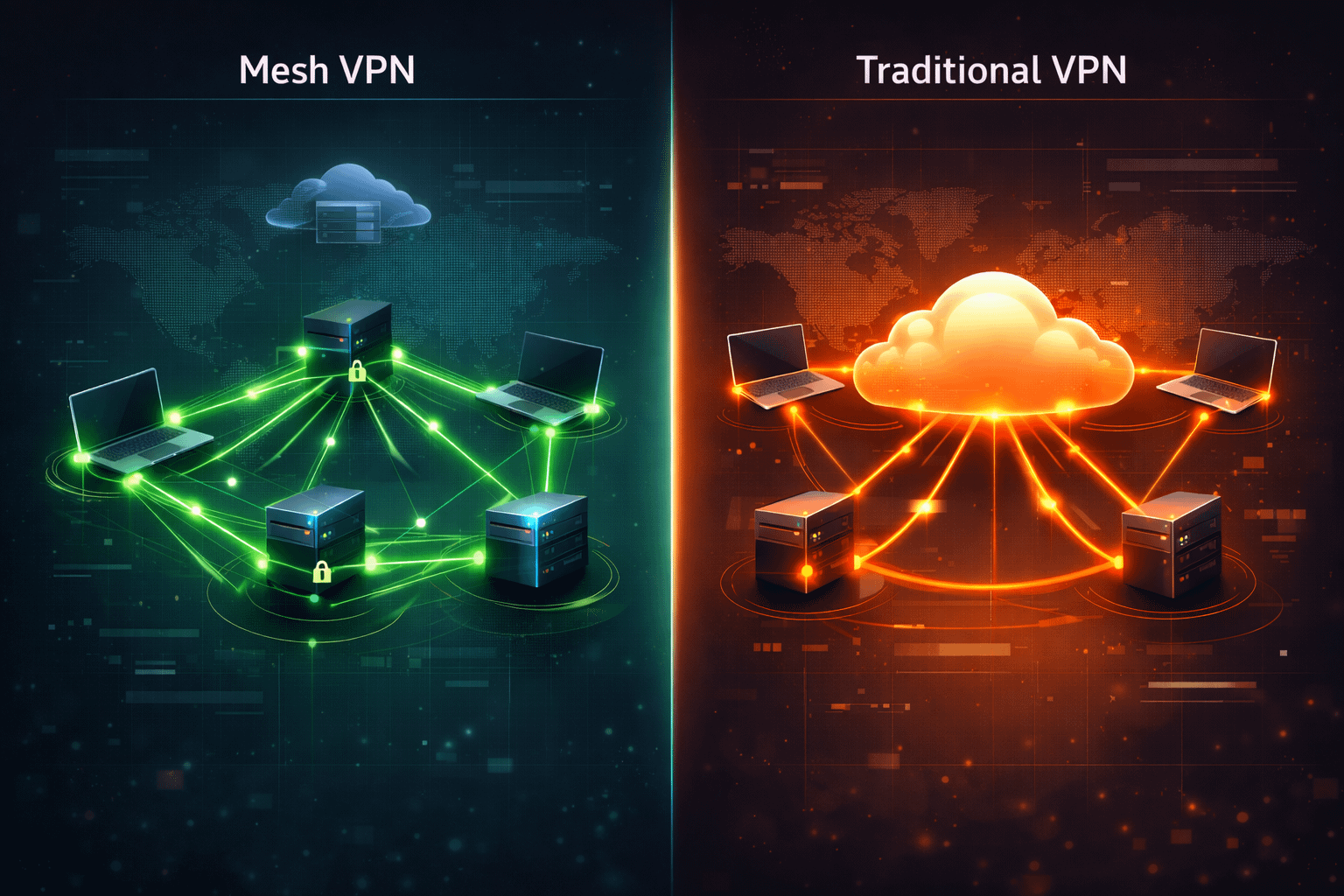

Architecture: How They Actually Work

All four create an overlay network that lets your devices communicate as if they were on the same LAN. The differences are in how they coordinate that network and where the traffic flows.

Tailscale and Headscale: The Coordination Model

Tailscale and Headscale share the same architecture because Headscale is literally a reimplementation of Tailscale’s server. The model works like this: a coordination server distributes WireGuard public keys, IP address assignments, and ACL policies to all nodes in your network. When node A wants to talk to node B, it gets B’s public key and endpoint information from the coordination server, then establishes a direct WireGuard tunnel. The actual traffic flows directly between the two nodes — peer-to-peer, encrypted, never touching the coordination server.

The coordination server’s role is purely administrative: it’s a phone book, not a post office. It tells nodes how to find each other, but it doesn’t carry their mail. This is a critical distinction for the security discussion later — the coordination server knows who is in your network and where they are, but it never sees what they’re saying to each other.

When direct peer-to-peer connections fail — symmetric NAT, strict corporate firewalls, mobile networks stuck behind carrier-grade NAT — traffic falls back to DERP relay servers. DERP (Designated Encrypted Relay for Packets) is Tailscale’s relay protocol. The relay forwards encrypted WireGuard packets between nodes that can’t reach each other directly. With Tailscale’s hosted service, these relays are shared public infrastructure distributed globally — free to use but with no performance guarantees. With Headscale, you can enable an embedded DERP server on the same box, keeping even fallback relay traffic on your own infrastructure.

The key difference between Tailscale and Headscale: Tailscale’s coordination server is proprietary and cloud-hosted. Headscale gives you the exact same architecture with the coordination server on your own box. Same clients, same protocol, same data plane — different trust model for the control plane.

NetBird: The Independent Stack

NetBird uses WireGuard for the data plane just like Tailscale, but everything else is built from scratch — own client, own server infrastructure, own relay system. The server side has three components instead of one:

The Management Server handles authentication, policy distribution, and peer coordination. Think of it as the equivalent of the Tailscale/Headscale coordination server, but with a built-in web dashboard and API-driven policy management instead of JSON config files.

The Signal Server handles WebRTC signaling for peer discovery — it’s what lets nodes find each other and negotiate direct connections. This is a separate concern from policy management, which is why it’s a separate service.

The TURN/Relay Server provides NAT traversal fallback, similar to Tailscale’s DERP but using the Coturn implementation (or since v0.29.0, a newer WebSocket-based relay). When peers can’t connect directly, traffic goes through here.

This three-component architecture is more complex to deploy and maintain than Headscale’s single binary. But it gives you complete independence from the Tailscale ecosystem — if Tailscale Inc. changes their client license, breaks API compatibility, or goes in a direction you don’t like, NetBird is entirely unaffected. You’re running a fully independent stack.

NetBird also supports kernel-level WireGuard on Linux by default (with userspace as fallback), which can give a performance edge on older hardware where Tailscale’s userspace optimizations haven’t caught up yet.

Cloudflare Mesh: The Edge Model

Cloudflare Mesh is architecturally different from the other three. There’s no peer-to-peer — every connection routes through Cloudflare’s global edge network across 330+ cities. This eliminates NAT traversal problems entirely (agents connect outbound to Cloudflare, no inbound ports needed), but it means all your private mesh traffic transits Cloudflare’s infrastructure. The underlying protocol isn’t publicly disclosed — they don’t confirm WireGuard.

Tailscale/Headscale/NetBird: your traffic goes directly between your devices (peer-to-peer WireGuard). Only coordination metadata touches the server.

Cloudflare Mesh: all traffic routes through Cloudflare’s edge network. No peer-to-peer path exists.

Installation: Getting Started with Each

Let’s get practical. Here’s what it actually takes to set up each solution from scratch on Ubuntu/Debian servers — the kind of thing you’d do on a Hetzner VPS.

Tailscale

The easiest of the four. One command to install, one to authenticate:

curl -fsSL https://tailscale.com/install.sh | sh

tailscale up

A browser window opens, you sign in with your SSO provider (Google, GitHub, Microsoft, etc.), and the device joins your tailnet. Repeat on every device. That’s it: your nodes can now reach each other by Tailscale IP or MagicDNS name.

For a headless server, use an auth key instead:

# Generate auth key in Tailscale admin console first

tailscale up --authkey=tskey-auth-xxxxx

To advertise a subnet route or enable an exit node:

# Advertise a local network through this node

tailscale up --advertise-routes=192.168.1.0/24

# Offer this node as an exit node (other devices can then route their internet traffic through it)

tailscale up --advertise-exit-node

Then approve the routes in the Tailscale admin console. ACL policies are managed through a HuJSON file in the admin dashboard, which is powerful but requires learning the syntax.

Total time: under 5 minutes per node. No server to deploy, no config files to write, no TLS certs to manage. The trade-off is clear: maximum convenience, zero control over the coordination infrastructure.

Headscale

You need a server with a public IP, a domain name pointing to it, and TLS certificates. Let’s set it up:

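# Download the latest release (release assets are usually named with the version, e.g. headscale_<version>_linux_amd64; check the releases page and adjust the URL if this one returns a 404)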

wget https://github.com/juanfont/headscale/releases/latest/download/headscale_linux_amd64 -O /usr/local/bin/headscale

chmod +x /usr/local/bin/headscale

# Create config directory and default config

mkdir -p /etc/headscale

headscale generate config > /etc/headscale/config.yaml

Edit the config. The key settings:

# /etc/headscale/config.yaml (key sections)

server_url: https://hs.yourdomain.net:443

listen_addr: 0.0.0.0:443

tls_cert_path: /etc/letsencrypt/live/hs.yourdomain.net/fullchain.pem

tls_key_path: /etc/letsencrypt/live/hs.yourdomain.net/privkey.pem

# Custom DNS domain — one of the big wins

dns:
  base_domain: mesh.yourdomain.net
  magic_dns: true
  nameservers:
    global:
      - 1.1.1.1
      - 9.9.9.9

# Enable embedded DERP for self-hosted relay
derp:
  server:
    enabled: true
    region_id: 999
    stun_listen_addr: 0.0.0.0:3478

Create a systemd service and start it:

cat > /etc/systemd/system/headscale.service << 'EOF'

[Unit]

Description=Headscale coordination server

After=network.target

[Service]

Type=simple

ExecStart=/usr/local/bin/headscale serve

Restart=on-failure

RestartSec=5s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable --now headscale

Create a user (Headscale’s equivalent of a tailnet namespace):

headscale users create myuser

Now on your client machines, install Tailscale as usual but point it at your Headscale server:

curl -fsSL https://tailscale.com/install.sh | sh

tailscale up --login-server https://hs.yourdomain.net

To enable ACLs, create a policy file:

# /etc/headscale/acl.yaml

acls:
  - action: accept
    src:
      - "myuser"
    dst:
      - "myuser:*"

# Auto-approve routes and exit nodes
autoApprovers:
  routes:
    "192.168.1.0/24":
      - "myuser"
  exitNode:
    - "myuser"

Enable it in your config:

policy:
  path: /etc/headscale/acl.yaml

To remove Tailscale’s public DERP servers and use only your own (for full sovereignty):

# In config.yaml

derp:
  urls: [] # Remove default Tailscale DERP list
  server:
    enabled: true
    region_id: 999
    stun_listen_addr: 0.0.0.0:3478
  auto_update_enabled: false

Total time: 30–60 minutes including TLS cert setup. Ongoing maintenance: binary updates (check GitHub releases), Let’s Encrypt cert renewal (automate with certbot), and occasional config tweaks. The binary itself is tiny and resource-light; Headscale barely registers in htop alongside your other services.

Headscale needs a public IP. If your ISP uses Carrier-Grade NAT, you’ll need a VPS — which somewhat undermines the self-hosting argument since you’re then trusting a VPS provider. Though the VPS only sees encrypted WireGuard traffic and coordination data, which is a much narrower trust surface than Tailscale’s full view of your network.

NetBird

Self-hosted NetBird requires three server components. The quickest path is Docker Compose:

# Download the setup script

curl -fsSL https://github.com/netbirdio/netbird/releases/latest/download/getting-started-with-zitadel.sh -o setup.sh

chmod +x setup.sh

# Run setup — this configures Management, Signal, TURN, and Dashboard

./setup.sh

The script walks you through configuring your domain, OIDC provider (Zitadel by default, or bring your own), and relay infrastructure. Under the hood it creates a docker-compose.yml with all the services:

# What gets deployed:

# - Management Server (HTTPS/gRPC): handles auth, policy, coordination
# - Signal Server: WebRTC signaling for peer discovery
# - Coturn: TURN/STUN relay for NAT traversal
# - Dashboard: web UI for managing your network
# - Zitadel: OIDC identity provider (optional, can use external)

On client machines, install the NetBird client:

curl -fsSL https://pkgs.netbird.io/install.sh | sh

netbird up --management-url https://netbird.yourdomain.net

Total time: several hours for initial setup, including OIDC configuration and relay server setup. The Docker Compose approach simplifies deployment but there’s still meaningful configuration involved.

Once running, the NetBird Dashboard gives you a web UI for managing peers, groups, and access policies — no JSON files to edit. Create groups like “servers” and “desktops”, then define rules:

# Example policy (configured via Dashboard UI or API):

# Allow "desktops" group to reach "servers" group on SSH and HTTPS

Source: desktops

Destination: servers

Protocol: TCP

Ports: 22, 443

NetBird’s posture checks let you go further and deny mesh access if a device doesn’t meet requirements:

# Posture check examples (via Dashboard):

# - Minimum OS version: Ubuntu 22.04+

# - Required process running: crowdstrike-falcon

# - Block if: OS version < threshold

Since v0.65 (February 2026), NetBird includes a built-in reverse proxy for exposing internal services publicly, with auto TLS via Let’s Encrypt, custom domains, and path-based routing. This is the self-hosted answer to Tailscale Funnel that Headscale doesn’t have.

If self-hosting the full stack sounds like too much, NetBird offers a managed cloud option. Free tier available, paid plans from $5/user/month. You get the NetBird client and dashboard without deploying any server infrastructure. The self-hosted complexity is the main barrier — if you don’t need posture checks or the reverse proxy, Headscale is a much simpler self-hosting path.

Cloudflare Mesh

If you’re already a Cloudflare customer, this is the fastest path to a mesh network after Tailscale.

For servers (Linux VMs), deploy a Mesh Node — a lightweight headless agent:

# Install cloudflared if not already present

curl -fsSL https://pkg.cloudflare.com/cloudflared-ascii.pub | gpg --dearmor -o /usr/share/keyrings/cloudflared-archive-keyring.gpg

echo "deb [signed-by=/usr/share/keyrings/cloudflared-archive-keyring.gpg] https://pkg.cloudflare.com/cloudflared $(lsb_release -cs) main" | tee /etc/apt/sources.list.d/cloudflared.list

apt update && apt install cloudflared

# Register as a Mesh Node via Cloudflare Zero Trust dashboard

# or via CLI with a connector token from the dashboard

For desktops and mobile, install the Cloudflare One client (WARP), available for macOS, Windows, iOS, and Android. Enable the Mesh network in your Zero Trust dashboard, and enrolled devices can reach each other by their Mesh IPs.

Every enrolled device gets a private Mesh IP. Any participant can reach any other by IP — client-to-client, not just client-to-server. Subnet routing is supported via CIDR routes through Mesh nodes, with active-passive replicas for HA.

The Zero Trust integration is where Cloudflare Mesh shines for existing customers. Gateway policies you already have configured apply to Mesh traffic automatically. Device posture checks validate connecting devices. DNS filtering and traffic inspection are built in. If you’ve already invested time in Cloudflare Access rules, you don’t duplicate anything — it all just works across Mesh traffic too.

Cloudflare Mesh was announced on April 14, 2026, days ago as of this writing. Mesh DNS (automatic hostname resolution like postgres-staging.mesh) is still on the roadmap, not shipped. Docker container support is “expected later 2026.” The Linux desktop client situation is unclear: Mesh Nodes are headless server connectors. The 50-node free tier cap is a hard limit. Expect rough edges and missing features for a while.

Total time: 15–30 minutes if you already have a Cloudflare account. Zero ongoing server maintenance, since Cloudflare handles everything. The trade-off: all your private mesh traffic routes through their infrastructure.

Installation Summary

| | Tailscale | Headscale | NetBird | CF Mesh |
|---|---|---|---|---|
| Setup time | 5 min | 30–60 min | 2–4 hours | 15–30 min |
| Server required | No | Yes (public IP) | Yes (public IP) | No |
| Components | Client only | 1 binary + client | 3+ services + client | Agent only |
| TLS certs needed | No | Yes | Yes | No |
| OIDC setup | Built-in SSO | Optional | Required | Via CF Access |
| Ongoing maintenance | None | Low (single binary) | Medium (3+ services) | None |

Feature Comparison

Here’s where the differences get concrete. I’ve mapped out the features that actually matter for a sysadmin running a homelab or small business infrastructure.

| Feature | Tailscale | Headscale | NetBird | CF Mesh |
|---|---|---|---|---|
| MagicDNS / Internal DNS | Yes (<tailnet>.ts.net) | Yes (custom domain) | Yes (embedded resolver) | Planned (“Mesh DNS”) |
| Custom DNS domain | No | Yes | Yes (self-hosted) | TBD |
| Subnet routing | Yes | Yes | Yes (automated) | Yes (CIDR routes) |
| Exit nodes | Yes | Yes | Yes | Not mentioned |
| ACLs | HuJSON files | HuJSON (API) | UI-driven policies | CF Access/Gateway |
| SSO / OIDC | Yes | Yes | Yes | Via CF Access |
| Admin UI | Yes (polished) | Community only | Yes (built-in) | CF dashboard |
| Public service exposure | Funnel/Serve | No (planned) | Yes (reverse proxy v0.65+) | No |
| File sharing | Taildrop | Taildrop | No | No |
| SSH without keys | Yes (Tailscale SSH) | Yes (Tailscale SSH) | No | No |
| Posture checks | No | No | Yes (OS, processes, EDR) | Via CF device posture |
| SCIM provisioning | Enterprise only | No | Yes | Via CF Identity |
| HA routes/exit nodes | Premium ($18/user/mo) | Yes | Yes (all plans) | Yes |
| Network flow logs | Yes | No (planned) | Yes (self-hosted) | Via CF Gateway |
| Dynamic ACLs | Yes | No | Via group policies | Via CF Access |
| Multiple networks | Yes (tailnets) | No (single) | Yes | Yes (via CF One) |
| Relay infrastructure | Shared DERP (public) | Embedded DERP (own) | Coturn/WebSocket (own) | Cloudflare edge (always) |

A few things stand out. Headscale and NetBird both let you configure a custom DNS domain for your mesh — server.mesh.yourdomain.net instead of server.tail12ab.ts.net. That sounds minor until you’ve typed the wrong tailnet hash for the hundredth time in an SSH config. It’s one of those quality-of-life things that compounds across every script, bookmark, and muscle memory pattern.

NetBird’s posture checks are unique — the ability to deny mesh access based on device state (OS version, running processes, EDR status) is something neither Tailscale nor Headscale offer. If you’re in a regulated environment, this matters.

The Funnel/Serve gap is notable. Tailscale’s proprietary features for exposing services publicly don’t exist in Headscale. NetBird closes this gap with a built-in reverse proxy since v0.65 (February 2026) — auto TLS via Let’s Encrypt, custom domains, path-based routing. If you rely on Tailscale Funnel today, NetBird is the only self-hosted alternative that covers this without a separate Cloudflare Tunnel or nginx setup.

Cloudflare Mesh inherits the entire Cloudflare One security stack — Gateway policies, Access rules, device posture checks — which is either a massive advantage (if you’re already a Cloudflare customer) or irrelevant (if you’re not). But Mesh DNS for automatic hostname resolution is still on the roadmap, not shipped. For a product announced this week, that’s expected, but it means you’re working with IPs for now.

One more thing that surprised me looking at this matrix: HA routes and exit nodes. Tailscale gates this behind their Premium tier at $18/user/month. Both Headscale and NetBird include it for free, across all plans. If you need redundant exit nodes or failover routing — and for a business setup, you probably do — this is a real cost difference that adds up.

The admin UI situation is also worth noting. Tailscale has a polished web dashboard. NetBird has a built-in dashboard that’s functional and improving. Headscale has… community options. headscale-ui exists but doesn’t cover all features, and the primary interface is CLI. If you’re comfortable with headscale commands and don’t need a GUI, this is fine. If you’re managing the network with non-CLI people, it’s a pain point.

Finally, network flow logs — the ability to see what’s communicating with what across your mesh. Tailscale has this. NetBird has this (on your own infrastructure, which is great for auditing). Headscale doesn’t, and it’s only planned. If compliance or troubleshooting visibility matters to you, this is a gap.

One feature worth highlighting for SSH-heavy users: Tailscale SSH lets you SSH into nodes without managing SSH keys at all — authentication goes through Tailscale’s identity layer, so you just ssh user@node and it works. No authorized_keys files, no key rotation, no agent forwarding headaches. Headscale supports this too (same Tailscale client), which is a nice bonus if you’re migrating. NetBird and Cloudflare Mesh don’t have an equivalent — you manage SSH keys the traditional way.

Performance: The Numbers

This is where opinions meet data. NetBird published a detailed benchmark in April 2026 comparing two of the P2P solutions (NetBird and Tailscale) against Cloudflare Mesh: Cloudflare Mesh vs NetBird vs Tailscale: Performance Compared (also available as a YouTube video). The tests are real iperf3 runs across multiple regions and providers.

Test setup: NetBird 0.68.3, Tailscale 1.96.4, Cloudflare WARP 2026.3.846.0, iperf3 3.16 on Ubuntu 24.04 LTS. Both NetBird and Tailscale running in userspace mode for fair comparison.

One gap in these benchmarks: they only measure throughput (Mbps) and UDP packet loss — no latency, jitter, or RTT data. For interactive use cases like SSH, latency matters more than bandwidth. Given that Cloudflare Mesh adds two extra hops (client → edge → destination) compared to P2P’s direct tunnel, you’d expect higher latency on CF Mesh for regional connections. For intercontinental routes, Cloudflare’s optimized backbone might actually reduce latency compared to public internet routing. Until someone publishes proper latency benchmarks, treat the throughput numbers as only part of the performance picture.

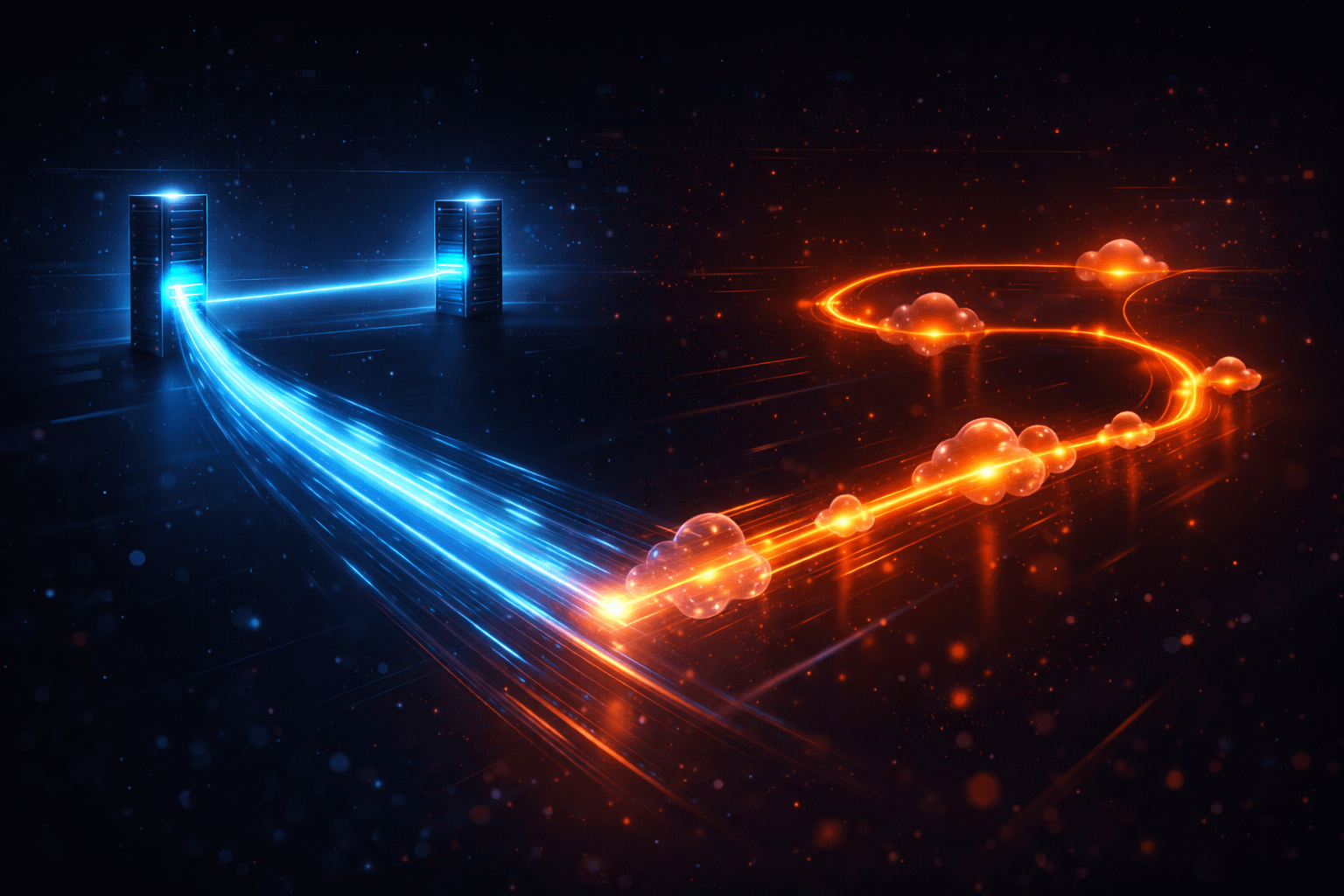

The headline: NetBird ≈ Tailscale, P2P >> Cloudflare (regionally)

NetBird and Tailscale perform “basically the same when it comes to network performance” — results fluctuate within normal network variation. Both use peer-to-peer WireGuard, so this makes sense. The real story is P2P versus edge-routed.

Since Headscale uses the exact same Tailscale clients and WireGuard data plane, its performance is identical to Tailscale for direct connections. The only difference is relay performance, where your own DERP server replaces Tailscale’s shared public relays.

European and regional routes: P2P dominates

| Route | NetBird | Tailscale | CF Mesh | Verdict |
|---|---|---|---|---|
| Hetzner DE → Hetzner DE | 1,260 / 1,260 Mbps | 1,300 / 1,220 Mbps | 250 / 290 Mbps | P2P ~5x faster |
| Helsinki → Germany | 810 / 746 Mbps | 842 / 750 Mbps | 249 / 349 Mbps | P2P ~2-3x faster |
| Hetzner → GCP EU-West3 | 1,410 / 1,220 Mbps | 1,380 / 1,390 Mbps | 273 / 387 Mbps | P2P ~3-5x faster |
| AWS US East → West | 358 / 423 Mbps | 347 / 339 Mbps | 162 / 300 Mbps | P2P wins |
| Berlin residential → Hetzner | 50 / 492 Mbps | 44 / 466 Mbps | 47 / 248 Mbps | P2P 2x on download |

(Format: upload / download)

For anyone running Hetzner-to-Hetzner — which includes my setup — P2P solutions deliver 1,200+ Mbps while Cloudflare Mesh tops out at 250–350 Mbps on the same routes. Not even close.
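The verdict column is simply the ratio of a P2P figure to the Cloudflare Mesh figure on the same route. A minimal shell sketch for recomputing those ratios from the table values; `speedup` is a helper name made up for this example, and it assumes `awk` is installed:

```shell
# speedup A B: print A divided by B, rounded to one decimal place.
# Both arguments are throughput figures in the same unit (Mbps here).
speedup() {
  awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", a / b }'
}

# Figures copied from the regional table (NetBird vs CF Mesh)
speedup 1260 250   # Hetzner DE -> Hetzner DE, upload: prints 5.0
speedup 746 349    # Helsinki -> Germany, download:    prints 2.1
```

Running it over both the upload and download columns shows why the verdicts are given as ranges rather than single numbers.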

Intercontinental routes: Cloudflare’s backbone wins

| Route | NetBird | Tailscale | CF Mesh | Verdict |
|---|---|---|---|---|
| Japan → Berlin residential | 81 / 28 Mbps | 24 / 8 Mbps | 158 / 43 Mbps | CF ~1.5-2x faster |
| Japan → Hetzner Nuremberg | 48 / 32 Mbps | 47 / 21 Mbps | 224 / 269 Mbps | CF ~5-8x faster |
| Berlin → AWS US East | 39 / 186 Mbps | 37 / 181 Mbps | 44 / 287 Mbps | CF wins on download |
| Hetzner DE → AWS US East | 209 / 148 Mbps | 206 / 154 Mbps | 198 / 215 Mbps | Roughly even |

(Format: upload / download)

On long-distance international routes, Cloudflare’s optimized backbone genuinely outperforms P2P — sometimes dramatically. The Japan-to-Europe route shows 5-8x faster throughput through Cloudflare’s edge than direct WireGuard. Their network finds better paths than the public internet.

UDP: Where Cloudflare Mesh falls apart

This is the number that should make you pause. At 300 Mbps fixed rate, Hetzner Germany → AWS US West:

| Solution | Sent | Received | Packet Loss |
|---|---|---|---|
| NetBird | 300 Mbps | 295 Mbps | 1.2% |
| Cloudflare Mesh | 300 Mbps | 257 Mbps | 14% |

14% packet loss. If you’re running VoIP, video conferencing, gaming, or anything real-time through Cloudflare Mesh, you’re going to have a bad time. This is an inherent trade-off of edge-routed architecture — every packet takes two extra hops (to and from Cloudflare’s edge) compared to a direct WireGuard tunnel.
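As a rough sanity check, the loss figure can be approximated from the sent and received bitrates. This is only a sketch: iperf3 itself counts lost datagrams, so a number derived from rounded bitrates will not match its report exactly. `loss_pct` is a hypothetical helper name, and `awk` is assumed to be available:

```shell
# loss_pct SENT RECEIVED: approximate loss in percent, one decimal place.
# Both arguments are bitrates in the same unit (Mbps here).
loss_pct() {
  awk -v s="$1" -v r="$2" 'BEGIN { printf "%.1f\n", (s - r) / s * 100 }'
}

loss_pct 300 257   # Cloudflare Mesh row: prints 14.3
```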

Relay performance: The hidden differentiator

Direct P2P connections perform identically across Tailscale, Headscale, and NetBird — they’re all WireGuard. The performance gap shows up when direct connections fail and traffic falls back to relays. This happens more than you’d think: symmetric NAT, strict corporate firewalls, mobile networks, double-NAT.

On Tailscale’s free tier, relay goes through shared public DERP servers with no throughput guarantees. Users regularly report dramatically slower speeds through DERP compared to direct connections. With Headscale’s embedded DERP or NetBird’s self-hosted TURN, your relay performance is bounded by your own server’s bandwidth — which for a decent Hetzner node is going to crush a congested shared relay.

This is one of the most tangible day-to-day improvements of self-hosting: not theoretical privacy benefits, but actual throughput when you need relay.
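To find out whether a peer is currently reached directly or through a relay, `tailscale ping` reports the path each reply took. A small sketch that classifies one line of that output. It assumes relayed replies contain `via DERP(<region>)` and direct replies contain `via <ip>:<port>`, which is how the clients I’ve used print them; adjust the patterns if yours differs. `path_kind` and the peer name `backup-node` are made up for illustration:

```shell
# path_kind LINE: classify one line of `tailscale ping` output.
# Prints "relay", "direct", or "unknown".
path_kind() {
  case "$1" in
    *"via DERP("*) echo "relay" ;;    # reply came back through a DERP relay
    *" via "*)     echo "direct" ;;   # reply came from a peer endpoint (ip:port)
    *)             echo "unknown" ;;  # timeout, error, or unexpected format
  esac
}

# Usage sketch (not run here):
#   tailscale ping backup-node | while read -r line; do path_kind "$line"; done
```

If a peer you care about stays on "relay", that is the connection where a self-hosted DERP or TURN server makes the difference.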

Performance implications for different use cases

Let’s cut through the numbers and talk about what actually matters for specific scenarios:

Server-to-server backups and replication: If you’re running ZFS send/recv, rsync, or database replication between Hetzner nodes, P2P WireGuard (Tailscale/Headscale/NetBird) is the clear winner. 1,200+ Mbps versus 250–350 Mbps through Cloudflare Mesh is not a marginal difference: at those rates, a backup that takes 10 minutes over P2P takes roughly 35 to 50 minutes through Cloudflare Mesh. Don’t route bulk data through Cloudflare Mesh if you have any other option.

SSH and web admin: Throughput differences are irrelevant here. An SSH session or web dashboard uses kilobits per second, not gigabits. Use whichever tool is most convenient to connect. The detour through Cloudflare’s edge adds some latency, which the benchmarks above didn’t measure, but for regional connections it is unlikely to be noticeable in interactive use.

VoIP and video calls: Cloudflare Mesh’s 14% UDP packet loss is a non-starter. If you’re running any kind of real-time communication through your mesh, stick with P2P solutions. This is an inherent limitation of edge-routed architecture, not something likely to be “fixed” — every packet takes extra hops.

Accessing services from abroad: If you’re travelling in Asia and need to reach European servers, Cloudflare Mesh genuinely wins. Their backbone finds better intercontinental paths than the public internet. The 5-8x speed improvement on the Japan-to-Europe route is not a rounding error — it’s Cloudflare’s core competency showing.

Mobile on unreliable networks: This is where self-hosted relay matters most. If your phone is on hotel WiFi with strict NAT and can’t establish a direct WireGuard connection, the relay is all you have. Tailscale’s shared DERP might work or might be frustratingly slow. Your own Headscale DERP on a good server will give you consistent, fast relay. Cloudflare Mesh will also be consistent, since edge routing is always the path.
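For the server-to-server backup scenario above, the impact is easy to estimate from the benchmark throughput. A back-of-the-envelope sketch in plain shell arithmetic: the 90 GB job size is an arbitrary example, `transfer_minutes` is a made-up helper name, and the calculation ignores protocol overhead and assumes the link sustains the measured rate:

```shell
# transfer_minutes SIZE_GB RATE_MBPS: whole minutes (rounded) to move
# SIZE_GB gigabytes at a sustained RATE_MBPS. Decimal units: 1 GB = 8000 Mbit.
transfer_minutes() {
  seconds=$(( $1 * 8000 / $2 ))
  echo $(( (seconds + 30) / 60 ))
}

transfer_minutes 90 1200   # P2P WireGuard, Hetzner to Hetzner: prints 10
transfer_minutes 90 350    # Cloudflare Mesh, best case:        prints 34
transfer_minutes 90 250    # Cloudflare Mesh, worst case:       prints 48
```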

Worth noting: Tailscale has made major optimizations to their userspace WireGuard. On modern bare metal (i5-12400), they hit 13.0 Gbps — actually surpassing kernel WireGuard (11.8 Gbps). The old “Tailscale is slow because userspace” narrative is outdated. Source

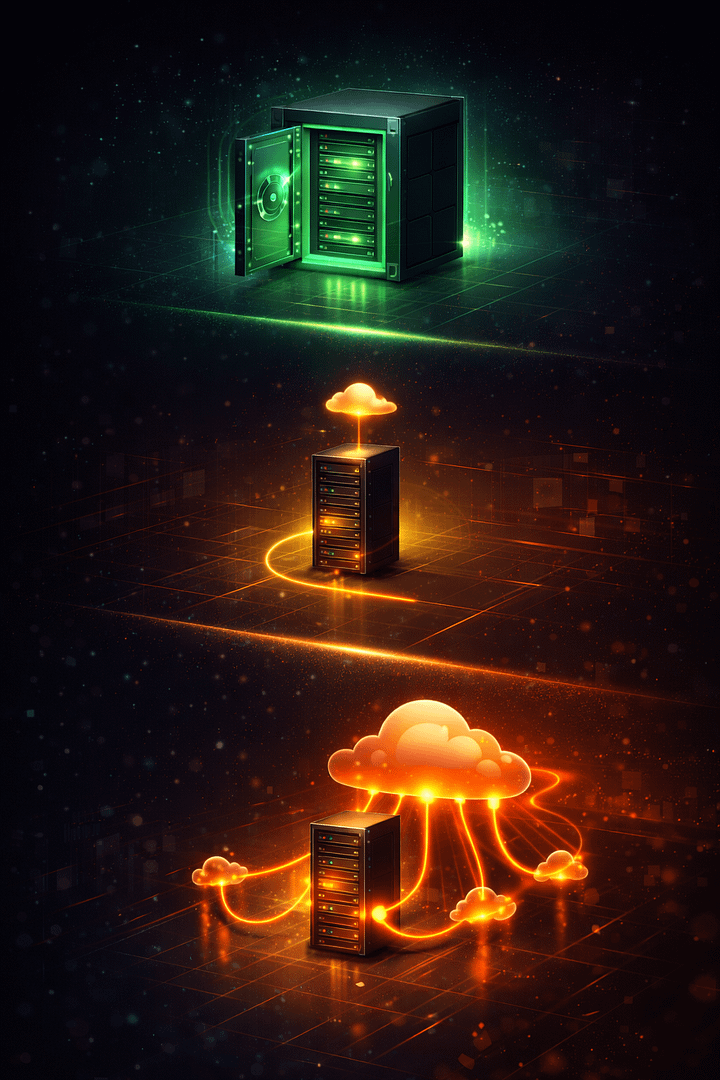

Security and Privacy: The Sovereignty Spectrum

This is the core question for a lot of people evaluating these tools: who sees what?

What the coordination server knows

Even with Tailscale’s hosted service, your actual traffic is encrypted end-to-end via WireGuard and never passes through their servers (except briefly via DERP relays when direct connections fail). But the coordination server necessarily knows:

- Every device in your network — public keys, hostnames, OS

- When each device connects and disconnects

- IP addresses of every node — public-facing IPs reveal physical location

- Network topology — which nodes exist and how they’re grouped

- ACL policies — your entire access control structure

For many users this metadata is benign. For others — regulated industries, privacy-conscious setups, strict data sovereignty requirements — this is sensitive information worth controlling.

The spectrum

These four tools sit on a clear sovereignty spectrum:

Level 1: Full sovereignty — Headscale with self-hosted DERP, or self-hosted NetBird. You control everything: coordination server, relay infrastructure, and (with NetBird) even the client. Network metadata never leaves your infrastructure. Even relay traffic stays on your boxes. This is the maximum-control option.

Level 2: Metadata exposure only — Tailscale. Traffic is peer-to-peer WireGuard, encrypted end-to-end. Only coordination metadata touches Tailscale’s servers. Tailscale’s position is that the coordination server is a low-trust component — it distributes public keys and policies, never private keys or traffic. This is architecturally true, but “low trust” isn’t “zero trust.”

Level 3: Full traffic through third party — Cloudflare Mesh. All traffic routes through Cloudflare’s edge. You’re not just exposing metadata — Cloudflare’s infrastructure is the transport layer for all your private network communication. Encrypted, yes, but passing through their network.

Self-hosted DERP: Closing the last gap

With Headscale, enabling the embedded DERP server and removing Tailscale’s public DERP servers from the config means even relay traffic never leaves your infrastructure. This is a frequently overlooked benefit: it’s not just coordination metadata that stays on your box, but relay path data too.

Key configuration details: embedded DERP is disabled by default — you must enable it. Tailscale’s public DERP servers are included as fallback by default — remove them for full sovereignty, but accept that your server becomes the only relay option. DERP access can be restricted to your tailnet members only.

The “already-in-Cloudflare” counterargument

The sovereignty concern with Cloudflare Mesh assumes they’re a new third party in your stack. But if you already run your public infrastructure through Cloudflare — DNS, CDN, WAF, DDoS protection, Cloudflare Tunnel — the trust decision is already made. All your WWW traffic already transits their network. Adding Mesh extends an existing trust boundary rather than creating a new one.

For these users, the practical benefits are real: unified trust boundary across public and private networking, shared security policies (Access rules, Gateway policies, device posture checks apply automatically), and secure server access without exposing SSH ports. Instead of having Cloudflare for public + Tailscale for private + possibly separate firewall rules for SSH access, everything consolidates into one provider, one set of policies, one dashboard.

What self-hosting doesn’t change

A few things stay the same regardless of whether you self-host:

WireGuard encryption is identical. The security of actual traffic is the same across Tailscale, Headscale, and NetBird — it’s WireGuard in all cases. Self-hosting doesn’t make the encryption stronger or weaker.

Client software trust. With Headscale, you’re still running Tailscale’s client code. You’ve replaced the server, not the client. With NetBird, you’re running their client — fully open source, but you’re still trusting code you probably haven’t audited line by line. The trust model shifts, it doesn’t disappear.

Operational security is on you. Self-hosting means you own the security hardening: firewall rules, updates, access controls, monitoring. A poorly secured Headscale server is worse than Tailscale’s hosted service, because you’ve added a point of failure without the team of security engineers Tailscale has maintaining theirs.

Cost Comparison

Let’s talk money. The pricing models are fundamentally different, and the right choice depends heavily on your scale.

| | Tailscale | Headscale | NetBird | CF Mesh |
|---|---|---|---|---|
| Free tier | 100 devices, 3 users | Unlimited (self-hosted) | Unlimited (self-hosted) | 50 nodes, 50 users |
| First paid tier | $6/user/month (Starter) | Free forever | $5/user/month (Team cloud) | TBD |
| HA routes/exit nodes | $18/user/month (Premium) | Free | Free (all plans) | Free |
| SCIM provisioning | Enterprise (custom pricing) | N/A | Team plan ($5/user/mo) | Via CF Identity |
| Hidden costs (self-hosted) | None | Server + domain + time | Server + domain + more time | None |

For a solo homelab with a handful of devices, all four are effectively free. The economics diverge at scale:

At 10 users / 50 devices: Tailscale Starter costs $60/month. Headscale is free (assuming you have a server). NetBird self-hosted is free; cloud is $50/month. Cloudflare Mesh is free under the 50-node cap.

At 50 users / 200 devices: Tailscale Starter jumps to $300/month. Headscale: still free. NetBird cloud: $250/month. Cloudflare Mesh: exceeds the free tier, paid pricing TBD.

If you need HA routes (and for business use, you probably do), Tailscale’s Premium tier at $18/user/month makes it expensive fast. Both Headscale and NetBird include HA for free.
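
The arithmetic behind those figures is simple enough to sketch. The per-user prices below are the ones quoted in the table above; the plan labels and the `monthly_cost` helper are names made up for this illustration, and the result covers licence cost only.

```python
# Per-user monthly list prices taken from the comparison table (USD).
PRICE_PER_USER = {
    "tailscale_starter": 6,
    "tailscale_premium": 18,
    "netbird_team_cloud": 5,
    "headscale": 0,
    "netbird_self_hosted": 0,
}


def monthly_cost(plan: str, users: int) -> int:
    """Licence cost only; server, domain, and admin time are not included."""
    return PRICE_PER_USER[plan] * users


# Reproduce the two scenarios discussed in the text.
for users in (10, 50):
    costs = {plan: monthly_cost(plan, users) for plan in PRICE_PER_USER}
    print(f"{users} users:", costs)
```

Swapping in `tailscale_premium` for the 50-user case shows how quickly the HA requirement changes the picture compared with the Starter tier.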

The hidden cost of self-hosting is your time — setup, maintenance, troubleshooting. For a single sysadmin who already manages servers, this is marginal. For a team without dedicated ops, it’s a real factor. Tailscale’s pricing buys you freedom from operational burden. Whether that’s worth $6-18/user/month depends on how you value your time.

Practical Concerns

Self-hosting requirements

| Concern | Headscale | NetBird |
|---|---|---|
| Public IP needed | Yes | Yes |
| CGNAT compatible | No (need VPS) | No (need VPS) |
| Server components | 1 (single binary) | 3+ (management, signal, relay) |
| Initial setup time | ~30–60 min | Several hours |
| TLS certs required | Yes | Yes |
| OIDC provider needed | Optional | Required |
| Ongoing maintenance | Low (updates, backups) | Medium (3 services, relay infra) |

OPSEC: Don’t reveal your infrastructure

This applies to both Headscale and NetBird self-hosted: your coordination server needs a public DNS entry, which is visible to anyone. Don’t run it on the same server as your main public-facing services. If headscale.example.com resolves to the same IP as www.example.com, you’ve publicly announced “this IP is also my mesh coordination server” — painting a target and revealing your infrastructure topology.

Better: a dedicated VM with a hostname that doesn’t obviously tie back to your main domain. hs.unrelated-domain.net reveals nothing about what else you run. Someone scanning your web server shouldn’t learn it’s also the brain of your private mesh network.
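
A quick self-check for this is to compare what the two names resolve to. The sketch below uses only Python's standard `socket.getaddrinfo`; the `shared_addresses` helper and the stubbed DNS data (documentation-range addresses) are hypothetical and exist only so the example runs offline.

```python
import socket
from typing import Callable


def resolve(hostname: str) -> set[str]:
    """Resolve a hostname to its set of IPv4/IPv6 addresses via the system resolver."""
    infos = socket.getaddrinfo(hostname, None)
    return {info[4][0] for info in infos}


def shared_addresses(
    mesh_host: str,
    public_hosts: list[str],
    resolver: Callable[[str], set[str]] = resolve,
) -> dict[str, set[str]]:
    """Map each public hostname to the addresses it shares with the mesh host."""
    mesh_ips = resolver(mesh_host)
    overlaps = {}
    for host in public_hosts:
        common = mesh_ips & resolver(host)
        if common:
            overlaps[host] = common
    return overlaps


# Offline demonstration with a stubbed resolver instead of real DNS.
fake_dns = {
    "headscale.example.com": {"203.0.113.10"},
    "www.example.com": {"203.0.113.10"},
    "hs.unrelated-domain.net": {"198.51.100.7"},
}
print(shared_addresses("headscale.example.com", ["www.example.com"], fake_dns.__getitem__))
print(shared_addresses("hs.unrelated-domain.net", ["www.example.com"], fake_dns.__getitem__))
```

Dropping the third argument makes it use real DNS. Note that this only compares DNS answers: if your web server sits behind a proxy such as Cloudflare, its public name resolves to the proxy's addresses, so an empty result does not prove the two services live on different machines.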

Scale

Tailscale is battle-tested at massive scale. Headscale has known instability beyond ~300 nodes (CLI timeouts, unreliable pings) — fine for homelab and small business, but a ceiling to know about. NetBird’s scale limits aren’t well documented yet. Cloudflare Mesh caps at 50 nodes on the free tier.

Client configuration friction

Tailscale and Cloudflare Mesh: install → authenticate → done. Simple for anyone.

Headscale: install the regular Tailscale client, then set a custom control plane URL that’s buried in a debug menu on mobile. Fine for sysadmins, annoying if you’re managing devices for less technical family members.

NetBird: own client, own onboarding flow. Not harder, but different — and switching from an existing Tailscale deployment means replacing the client on every device rather than just changing a URL.

When Each Tool Makes Sense

Choose Tailscale when:

- Operational simplicity matters most — it just works

- You rely on Funnel or Serve for exposing services

- You need multiple tailnets or device sharing across organizations

- You’re behind CGNAT and don’t want a VPS

- You’re scaling beyond a few hundred devices

- Polished admin UI and documentation matter to you

Choose Headscale when:

- You want the lightest self-hosting lift — single binary, keep your existing Tailscale clients

- Data sovereignty with minimal operational overhead

- You have an existing server that can take on the role (free incremental cost)

- Custom MagicDNS domain matters (server.mesh.yourdomain.net)

- You want self-hosted DERP relay for both privacy and performance

- A single tailnet is fine for your needs

Choose NetBird when:

- Full self-hosting AND you want features Headscale lacks — dashboard, posture checks, reverse proxy, HA on free tier

- Regulated environments where posture checks and SCIM provisioning matter

- You want self-hosted Funnel/Serve equivalent (built-in reverse proxy since v0.65)

- Maximum independence from any single vendor, including Tailscale

- You’re starting fresh and don’t care about Tailscale client compatibility

Choose Cloudflare Mesh when:

- You’re already a Cloudflare customer — extends existing trust boundary

- Zero ops overhead — no servers, relays, or certs to maintain

- Quick access to servers without exposing ports

- Long-distance international routes where CF’s backbone excels

- You don’t need data sovereignty or already trust Cloudflare with your traffic

- Avoid if: UDP-sensitive workloads, regional high-throughput needs

Decision Framework: Choosing for Your Situation

Rather than picking a “winner,” here’s how to think about which tools fit your infrastructure. The decision depends on three things: what you already have, what you’re willing to maintain, and where you draw your trust boundaries.

If you’re starting from zero

Just start with Tailscale. Seriously. Get your mesh working, understand what you actually use it for, learn where it helps and where it frustrates you. Then evaluate alternatives from a position of experience, not theory. The free tier covers 100 devices and 3 users — more than enough to learn on.

If you’re running Tailscale and want to self-host

Headscale is the obvious path. Same clients, config-change migration, single binary to maintain. You lose Funnel/Serve and the admin UI, you gain custom DNS, own DERP, and full metadata control. If your Tailscale frustrations are about relay speed, DNS naming, or metadata — Headscale addresses all three.

If you also need posture checks, a built-in admin dashboard, SCIM provisioning, or a self-hosted Funnel/Serve equivalent — and you’re willing to accept the operational complexity of a three-component stack — NetBird is the more feature-complete self-hosted option.

If you’re already a Cloudflare customer

Cloudflare Mesh is worth trying as a secondary access path at minimum. It extends a trust boundary you already live inside, costs nothing within the 50-node free tier, and requires zero infrastructure. Whether it becomes your primary mesh depends on your throughput and UDP sensitivity requirements — the regional performance penalty and packet loss are real limitations.

If you have an underutilized server

This changes the economics entirely. The cost argument for managed services evaporates when the box is already paid for and maintained. Headscale’s incremental operational cost on an existing server is close to zero — it’s a single binary that barely shows up in htop. Self-hosting becomes the obvious choice when you’re not adding a monthly bill to do it.

If resilience matters

Run two. Any combination of the above gives you independent control planes with no shared failure modes. The hybrid approach — self-hosted primary plus managed backup — is something most comparison articles never consider, but it’s how experienced sysadmins actually build infrastructure.
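
The framework above can be condensed into a few lines of code. This is only a sketch of the reasoning in this section, not a rule engine: the `Situation` fields and the `shortlist` function are names invented for the illustration, and real decisions involve more nuance than five booleans.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    has_mesh_experience: bool    # have you already run a mesh VPN?
    wants_self_hosting: bool     # sovereignty over coordination and relay
    needs_posture_or_scim: bool  # posture checks, SCIM, built-in dashboard
    cloudflare_customer: bool    # public traffic already goes through Cloudflare
    has_spare_server: bool       # an underutilized box that is already paid for


def shortlist(s: Situation) -> list[str]:
    """Return the tools worth evaluating, primary candidate first."""
    if not s.has_mesh_experience:
        return ["Tailscale"]  # starting from zero: learn on the managed service
    picks = []
    if s.wants_self_hosting or s.has_spare_server:
        picks.append("NetBird" if s.needs_posture_or_scim else "Headscale")
    if s.cloudflare_customer:
        picks.append("Cloudflare Mesh")  # secondary path inside an existing trust boundary
    return picks or ["Tailscale"]


# Example: existing Tailscale user with a spare server who is also a Cloudflare customer.
print(shortlist(Situation(True, True, False, True, True)))
```

A result with two entries is the "run two" case: one self-hosted primary plus one managed backup.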

Other Tools Worth Mentioning

One more name comes up in these conversations, but it solves a different problem:

Pangolin is a self-hosted tunneling tool using Traefik for reverse proxying. It’s geared toward selective service exposure rather than full mesh networking — more of a self-hosted Cloudflare Tunnel competitor. If you don’t need a full mesh VPN and just want to expose specific services, it’s worth a look. (Source)

My Scenario: What I’m Actually Going to Do

Theory is nice. Here’s my actual situation and what I’m planning.

I run two Hetzner nodes. One is my main web server — public-facing, all traffic already goes through Cloudflare (DNS, CDN, WAF, Tunnel). The other used to be my secondary DNS server, but DNS has moved to Cloudflare too. Now it mostly runs IRC, and I’m considering Matrix or Mattermost on it. It’s underutilized but already paid for and in my maintenance rotation.

I’m currently running Tailscale across everything. It works, but I’ve been bothered by three things: the relay speed on the free tier when direct connections fail (it can be atrocious), the fact that my MagicDNS names are Tailscale-assigned hashes, and the metadata exposure — Tailscale knows my full device inventory, connection patterns, and network topology.

My plan: both Headscale and Cloudflare Mesh.

Headscale on the Hetzner Cloud node

This is my server-to-server backbone. Zero additional cost — the box is already paid for. It adds Headscale alongside IRC/chat as an incremental workload, not a whole new server. I get full sovereignty over coordination and relay, custom MagicDNS domain, and DERP performance bounded by my own Hetzner bandwidth (which is excellent). The Headscale instance won’t be on the same hostname or IP as my main web server — OPSEC matters.

Since I’m already running Tailscale clients everywhere, switching to Headscale is a config change per device: point it at my new coordination server instead of Tailscale’s. No client reinstallation needed.

Cloudflare Mesh for desktop-to-server access

My daily workflow is SSH from my desktop to Hetzner nodes. For this, Cloudflare Mesh is perfect: install the Cloudflare One client on my desktop, enroll the servers as Mesh Nodes, done. No firewall rules to punch, no inbound ports to expose — agents connect outbound to Cloudflare’s edge. Since all my public traffic already flows through Cloudflare, this doesn’t add a new trust relationship.

More importantly, it’s my backup path. If I somehow get fail2banned from my own Headscale node (it happens), or the Hetzner VM goes down for maintenance, or I break something while setting up Mattermost — Cloudflare Mesh still gets my desktop to my servers through a completely independent control plane.

What I gain over current Tailscale

Spelling it out, because these are the specific things that bother me today and how each piece of the new setup addresses them:

Relay speed: Tailscale’s free DERP relays have been the single biggest pain point. When a direct connection fails — and it does, especially on mobile networks or behind hotel WiFi — the fallback relay speed drops off a cliff. With my own embedded DERP on the Headscale node, relay performance is bounded by my Hetzner connection, which is excellent. This alone justifies the switch.

Custom DNS domain: Instead of server.tail12ab.ts.net, I get server.mesh.mydomain.net. Every SSH config, every script, every bookmark becomes cleaner and consistent with my existing DNS. It’s the kind of thing that sounds trivial but compounds across hundreds of daily interactions.

Metadata sovereignty: My full device inventory, connection patterns, public IPs, and network topology stay on my infrastructure instead of Tailscale’s coordination server. Whether this matters depends on your threat model — for me, managing both personal and business infrastructure through the same mesh, it does.

Backup access path: If I lock myself out of my own Headscale node (it happens — fat-finger a firewall rule, fail2ban yourself, botch an update), Cloudflare Mesh gives me a completely independent way back in. This is something I don’t have today with Tailscale as my only mesh.

Zero additional cost: The ex-DNS Hetzner node is already in my budget. Headscale is free. Cloudflare Mesh’s free tier covers my needs easily. The total incremental cost of this migration is zero euros per month.

Why both?

Two independent mesh networks with zero shared failure modes. Headscale for the things that matter — server-to-server throughput, metadata sovereignty, custom DNS, fast relay. Cloudflare Mesh for the things where convenience matters — quick desktop access, no ports to manage, and a resilient backup path that doesn’t depend on anything I run.

If Cloudflare has an outage or changes their terms, my Headscale mesh keeps running. If my Headscale node goes down, Cloudflare Mesh still gets me to my servers. Neither depends on the other. That’s the kind of resilience you can’t get from going all-in on a single solution.
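
To put a rough number on that: if the two paths really do fail independently, access is lost only when both are down at once, so the unavailabilities multiply. The availability figures below are made-up placeholders, not measurements of Headscale or Cloudflare, and independence is itself the key assumption.

```python
def combined_availability(*availabilities: float) -> float:
    """Availability of at least one path, assuming the paths fail independently."""
    all_down = 1.0
    for a in availabilities:
        all_down *= 1.0 - a  # probability that this path is down too
    return 1.0 - all_down


# Hypothetical figures: a self-hosted node at 99% and a managed service at 99.9%.
self_hosted = 0.99
managed = 0.999
both = combined_availability(self_hosted, managed)
print(f"either path reachable: {both:.5%}")
```

Anything the two paths share (the desktop's own internet connection, for example) sits outside this calculation and caps the real-world figure.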

The migration path

The beauty of the Headscale approach is that migration from Tailscale is incremental, not a big-bang cutover. The steps:

- Set up Headscale on the ex-DNS Hetzner node. Get it running, configure the custom DNS domain, enable embedded DERP, set up ACLs.

- Generate pre-auth keys and start moving nodes one at a time: tailscale up --login-server https://hs.mydomain.net --authkey=...

- Test thoroughly with a couple of nodes before moving the rest. Existing Tailscale connections keep working on nodes you haven’t migrated yet, so nothing breaks during the transition.

- Once everything is on Headscale, remove Tailscale’s public DERP servers from the config for full sovereignty.

- In parallel, enroll the same nodes in Cloudflare Mesh as a backup path. The two networks operate independently — no conflicts.

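If something goes wrong, a node can be pointed back at Tailscale’s hosted service by re-running tailscale up with --login-server set to Tailscale’s default control server (https://controlplane.tailscale.com) and re-authenticating. The safety net is always there.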
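
The staged rollout can be sketched as a small planning script. It only builds and prints the command from the steps above; it does not run anything. The node names, the `<pre-auth-key>` placeholder, and the `plan` and `migration_command` helpers are hypothetical, and the real pre-auth key comes from your Headscale server.

```python
import shlex

LOGIN_SERVER = "https://hs.mydomain.net"  # coordination server hostname from the steps above


def migration_command(auth_key: str, login_server: str = LOGIN_SERVER) -> list[str]:
    """Build the argv that re-points one node at the Headscale server."""
    return ["tailscale", "up", "--login-server", login_server, f"--authkey={auth_key}"]


def plan(nodes: list[str], pilot_size: int = 2) -> tuple[list[str], list[str]]:
    """Split nodes into a pilot batch to test first and the remainder to move later."""
    return nodes[:pilot_size], nodes[pilot_size:]


nodes = ["web", "chat", "desktop", "laptop"]  # hypothetical node names
pilot, rest = plan(nodes)
print("pilot batch:", pilot)
print("move later:", rest)
print("run on each node:", shlex.join(migration_command("<pre-auth-key>")))
```

Keeping the pilot batch small matches the "test with a couple of nodes first" step; the remaining nodes stay on Tailscale's hosted service until you run the command on them.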

What I’m not doing, and why

I’m not going with NetBird, even though it has more features than Headscale. The three-component server stack is more complexity than I want to maintain for a hybrid homelab/business setup, and I don’t need posture checks or SCIM provisioning. If I were starting from scratch with no Tailscale clients deployed, or if I were in a regulated environment requiring device compliance enforcement, NetBird would be the stronger choice. For my use case, moving an existing Tailscale deployment to self-hosted with minimal disruption, Headscale’s single binary and Tailscale client compatibility make it the path of least resistance.

I’m also not going all-in on Cloudflare Mesh as my primary network. The performance data is clear: for Hetzner-to-Hetzner traffic (my bread and butter), P2P WireGuard is 3-5x faster. And 14% UDP packet loss rules out Cloudflare Mesh for anything latency-sensitive. But as a secondary access path that costs zero infrastructure and lives in a trust boundary I’m already inside? Perfect.

The bottom line

Most comparison articles assume you’re picking one tool. In practice, sysadmins layer tools based on trust boundaries and existing infrastructure. The question isn’t “which mesh VPN is best?” — it’s “where are my trust boundaries and what do I already have?”

If you already have a box sitting around, Headscale is practically free to add. If you’re already a Cloudflare customer, Mesh is practically free to try. If you need maximum independence, NetBird gives you everything self-hosted at the cost of more ops. And if none of this matters to you and you just want things to work, Tailscale remains excellent at what it does.

You probably don’t need to choose one. You need to decide what matters to you — sovereignty, convenience, performance, resilience — and pick the right tool for each layer. The tools are getting good enough that the “just pick one” era is over. Mix, match, and build something that reflects how you actually think about your infrastructure.

The mesh VPN space in 2026 is genuinely good. Tailscale proved the concept and made it mainstream. Headscale proved you could self-host the control plane without losing the client experience. NetBird proved you could build a fully independent stack with enterprise features. And now Cloudflare is proving that edge-routed mesh has a place alongside peer-to-peer, especially for users already in their ecosystem.

Competition is making all of these better. Tailscale’s userspace performance improvements came from being pushed by alternatives. NetBird’s reverse proxy feature directly addresses Tailscale Funnel’s proprietary lock-in. Cloudflare entering the space forces everyone to think about pricing and convenience more seriously. Whatever you choose, you’re choosing well — the floor has risen dramatically, and the differences are increasingly about philosophy and fit rather than basic capability.

I’ll write a follow-up once I’ve actually migrated and lived with the Headscale + Cloudflare Mesh setup for a few weeks. Theory is cheap; experience is what matters.

Sources and Further Reading

- Cloudflare Mesh vs NetBird vs Tailscale: Performance Compared (NetBird) — also as YouTube video

- Introducing Cloudflare Mesh (Cloudflare blog, April 2026)

- Headscale Features

- NetBird Documentation

- Surpassing 10Gb/s with Tailscale (Tailscale blog)

- Tailscale vs NetBird vs Headscale: Mesh VPN 2026 (PkgPulse)

- Tailscale vs Pangolin vs Headscale (Medium)

- I switched to Headscale instead of Tailscale, but most people probably shouldn’t (XDA)

- Cloudflare Mesh Documentation

- Headscale GitHub

- NetBird GitHub

- awesome-tunneling — comprehensive list of tunneling solutions